Vietnam has its first set of AI ethics rules

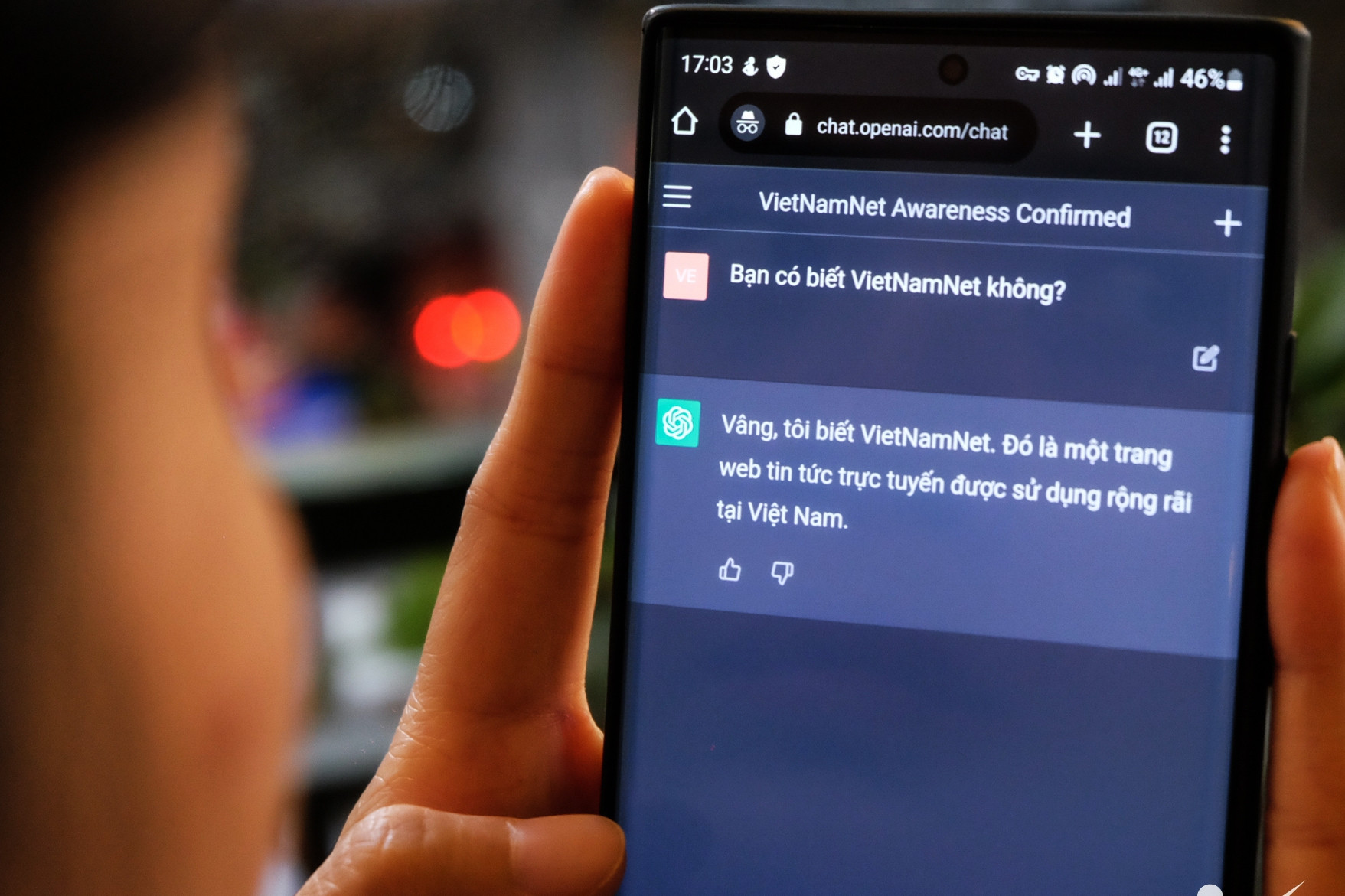

Artificial intelligence (AI) is expected to bring great benefits to people, society and the Vietnamese economy. Alongside its adoption of AI, Vietnam has begun to study and take measures to minimize risks in developing and using the technology, balancing economic, ethical and legal factors.

For this reason, the Ministry of Science and Technology (MOST) has issued guidance setting out a number of principles for the responsible research and development of artificial intelligence systems.

The guidance document sets out general principles and voluntary recommendations for reference and application when researching, designing, developing and providing artificial intelligence systems.

Science and technology agencies, organizations, businesses and individuals engaged in the research, design, development and provision of artificial intelligence systems are encouraged to apply it.

Accordingly, the research and development of AI systems in Vietnam should be based on the fundamental aim of moving towards a human-centered society in which everyone enjoys the benefits of artificial intelligence, with a reasonable balance between benefits and risks.

Research and development activities of AI systems in Vietnam aim to ensure technological neutrality. In all cases, the Ministry of Science and Technology encourages exchanges and discussions with the participation of relevant parties. Principles and guidelines will continue to be researched and updated to suit the practical situation.

The Ministry of Science and Technology said that the launch of the document aims to promote interest in researching, developing and using artificial intelligence in Vietnam in a safe and responsible manner, minimizing negative impacts on people and the community. This will increase users' and society's trust in AI and facilitate research and development of artificial intelligence in Vietnam.

Principles for Responsible AI Development

The document encourages developers to work in a spirit of collaboration, promoting innovation through the connection and interaction of artificial intelligence systems. Developers must ensure transparency by controlling the inputs and outputs of AI systems and by being able to explain the related analysis.

Developers need to pay attention to the controllability of artificial intelligence systems and assess the risks involved in advance. One way to assess risks is to test a system in a separate space, such as a laboratory or another environment where security and safety are guaranteed, before putting it into practice.

Additionally, to ensure controllability of AI systems, developers should pay attention to system monitoring (with assessment/monitoring tools or correction/update based on user feedback) and response measures (system shutdown, network shutdown, etc.) performed by humans or trusted AI systems.

Developers must ensure that the AI system will not cause harm to the life, body or property of users or third parties, including through intermediaries. They must pay attention to the security of the AI system, its reliability and its ability to withstand physical attacks or accidents. They must also ensure that the AI system does not violate the privacy of users or third parties. The privacy rights covered by this principle include the right to privacy of space (peace in personal life), the right to privacy of information (personal data) and the confidentiality of communications.

When developing artificial intelligence systems, developers must take special care to respect human rights and dignity. To the extent possible, depending on the characteristics of the technology applied, developers must take measures to ensure that the systems do not cause discrimination or unfairness due to bias (prejudice) in the training data. In addition, developers must fulfil their responsibility to stakeholders and support users.

Source: https://vietnamnet.vn/viet-nam-ra-nguyen-tac-ai-khong-duoc-gay-ton-hai-tinh-mang-tai-san-nguoi-dung-2292026.html
